RPC vs APIs vs Data Infrastructure: What Engineering Teams Actually Need to Build Onchain

Technical guide comparing RPC nodes, API providers, and data infrastructure for blockchain data. Learn what production systems actually require with real examples from wallet and trading applications.

A technical guide for data engineering teams evaluating how to access blockchain data at scale

Most data engineering teams building on blockchain go through the same evaluation process.

They need wallet balances, transaction histories, DEX trades, or NFT metadata. The obvious starting points are RPC nodes or blockchain APIs. Both seem straightforward - call an endpoint, get data back. But teams building production systems quickly discover these approaches have fundamental limitations that don't show up until you're trying to scale.

This guide breaks down when RPC nodes and API providers make sense, where they break down, and what production-ready blockchain data infrastructure actually requires. We'll focus on two common use cases: consumer wallets and trading applications.

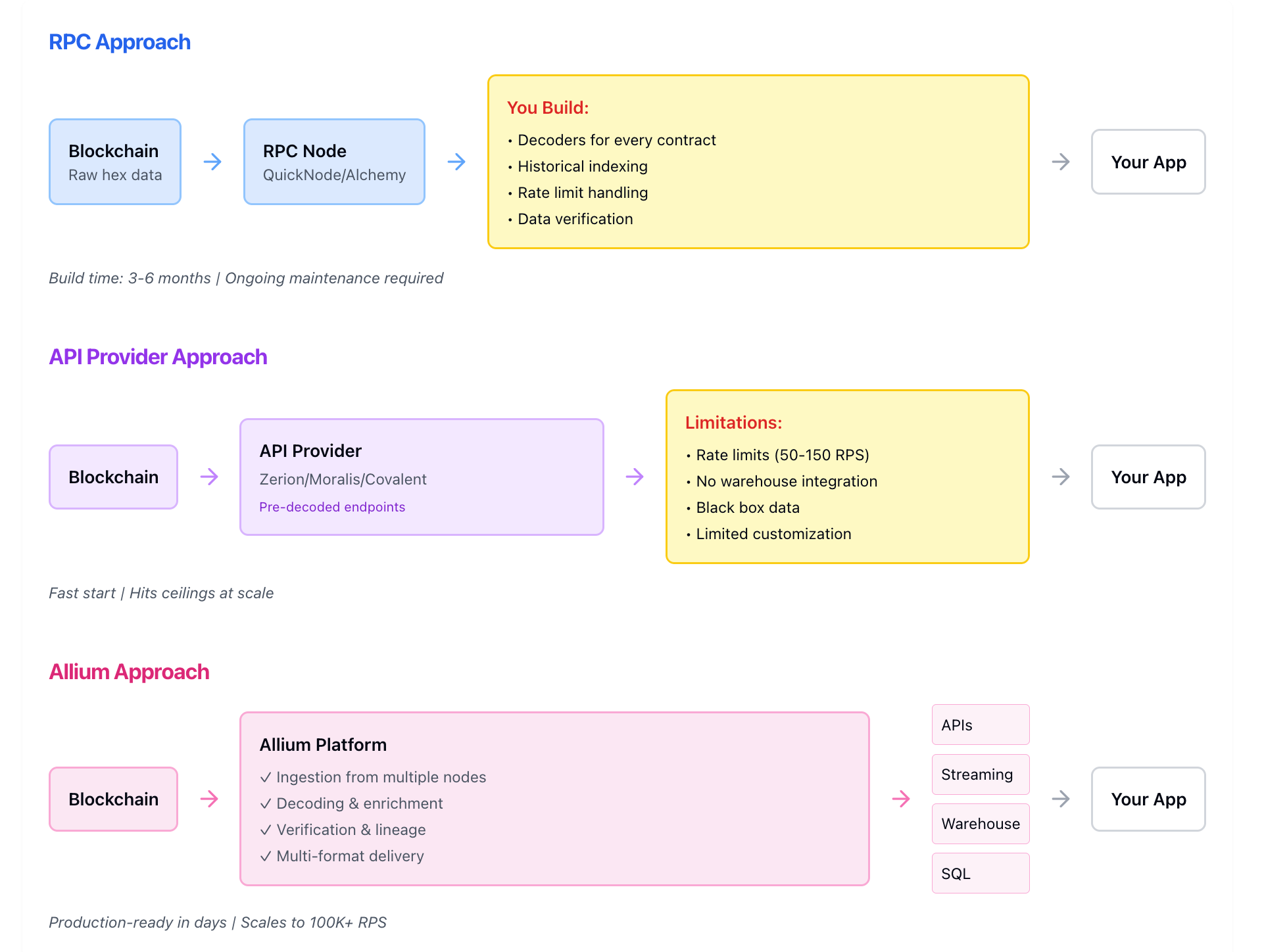

Why Teams Start With RPC Nodes

RPC (Remote Procedure Call) nodes are the most direct way to access blockchain data. You connect to an endpoint from a provider like QuickNode or Alchemy and query the blockchain directly. For sending transactions or reading the latest block, this works well.

The appeal is obvious: it's blockchain-native infrastructure. You're querying the source of truth directly. No intermediaries, no abstraction layers.

Where RPC Works Well:

- Transaction submission (writing to the blockchain)

- Mempool monitoring (watching pending transactions)

- Real-time block propagation (sub-second latency for MEV/arbitrage)

- Early prototyping and testing

If you're building an application that primarily writes to the blockchain, RPC providers are the right choice.

Where RPC Breaks Down for Data Consumption

The problems start when teams try using RPC for data queries - the kind that power wallet interfaces, trading dashboards, or analytics systems.

Problem 1: Raw Hex Data Requires Extensive Decoding

RPC returns raw transaction data as hexadecimal strings. Before you can use this data, you need to:

- Build decoders for every smart contract ABI you care about

- Maintain those decoders as protocols upgrade their contracts

- Handle edge cases: failed transactions, chain reorganizations, encoding variations

- Keep hiring engineers just to maintain the decoding pipeline

For a wallet showing ERC-20 transfers, you're decoding logs for hundreds of token contracts. For a DEX aggregator, you're decoding swaps across Uniswap, Curve, Balancer, and dozens of others. Each protocol has different contract structures. Each upgrade potentially breaks your decoders.

Real cost: 2-3 data engineers building and maintaining decoders = $40-60K/month in loaded costs.
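To make the decoding work concrete, here is a minimal sketch of turning one raw log entry into an ERC-20 transfer. It assumes the log is shaped like an `eth_getLogs` result (`address`, `topics`, `data`); the `Transfer` dataclass and function names are illustrative, not from any particular library.

```python
from dataclasses import dataclass
from typing import Optional

# keccak256("Transfer(address,address,uint256)"): topic0 of every ERC-20 Transfer event.
TRANSFER_TOPIC = "0xddf252ad1be2c89b69c2b068fc378daa952ba7f163c4a11628f55a4df523b3ef"


@dataclass
class Transfer:
    token: str
    sender: str
    recipient: str
    raw_amount: int  # integer base units, not yet scaled by the token's decimals


def topic_to_address(topic: str) -> str:
    """An indexed address is left-padded to 32 bytes; keep the last 20 bytes."""
    return "0x" + topic[-40:]


def decode_transfer(log: dict) -> Optional[Transfer]:
    """Decode one log entry; return None if it is not an ERC-20 Transfer."""
    topics = log["topics"]
    # ERC-721 emits Transfer with the same topic0 but four topics, so check the count.
    if len(topics) != 3 or topics[0].lower() != TRANSFER_TOPIC:
        return None
    return Transfer(
        token=log["address"],
        sender=topic_to_address(topics[1]),
        recipient=topic_to_address(topics[2]),
        raw_amount=int(log["data"], 16),
    )
```

This covers only the standard event. Non-standard tokens, proxy upgrades, and reorg handling are where the ongoing maintenance cost comes from.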

Problem 2: Historical Queries Are Prohibitively Slow

Need to show a user's transaction history from the past 6 months? Via RPC, you query block-by-block, parse each transaction, and reconstruct state. For Ethereum (roughly 2.6 million blocks per year at a 12-second block time), this takes hours.

The same problem applies to any historical query:

- Wallet balances at a specific past date

- Token transfer history for an address

- DEX trading volume over time

- NFT ownership history

RPC nodes aren't designed for this. They're optimized for reading current state, not historical analysis.
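A back-of-the-envelope estimate shows why a block-by-block scan takes hours. This sketch assumes Ethereum's 12-second block time, one RPC call per block, and a 50 requests-per-second plan; the last two are illustrative figures, not measurements.

```python
SECONDS_PER_DAY = 86_400


def blocks_in_window(days: int, block_time_seconds: int = 12) -> int:
    """Approximate number of blocks produced over a time window."""
    return days * SECONDS_PER_DAY // block_time_seconds


def scan_hours(blocks: int, calls_per_block: int, requests_per_second: int) -> float:
    """Wall-clock hours to scan every block at a fixed request rate."""
    return blocks * calls_per_block / requests_per_second / 3600


six_months = blocks_in_window(days=182)
print(six_months)  # 1310400
print(round(scan_hours(six_months, calls_per_block=1, requests_per_second=50), 1))  # 7.3
```

Fetching receipts or traces multiplies `calls_per_block`, and the estimate grows linearly with it.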

Problem 3: Credit Systems Create Unpredictable Costs

RPC providers charge using compute units or credits, with multipliers based on query complexity:

- Simple call: 1 unit

- Archive node query: 120 units

Your bill can spike 3-5x when usage patterns change.
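A small shift in query mix is enough to produce that kind of spike. The sketch below uses the two multipliers quoted above; the call volume and the archive shares (1% and 5%) are assumptions chosen for illustration.

```python
# Compute-unit multipliers quoted above.
UNIT_COST = {"simple": 1, "archive": 120}


def monthly_units(total_calls: int, archive_share: float) -> int:
    """Total compute units for a month, given the fraction of calls hitting archive state."""
    archive_calls = round(total_calls * archive_share)
    simple_calls = total_calls - archive_calls
    return simple_calls * UNIT_COST["simple"] + archive_calls * UNIT_COST["archive"]


baseline = monthly_units(1_000_000, archive_share=0.01)
shifted = monthly_units(1_000_000, archive_share=0.05)
print(baseline, shifted)  # 2190000 6950000
print(round(shifted / baseline, 1))  # 3.2
```

The request count is identical in both months; only the share of archive queries changed.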

Problem 4: Rate Limits Block Scale

Free tiers cap at 2-50 requests per second. Paid plans top out at 50-300 RPS. For a consumer wallet during a token airdrop or a trading app during market volatility, you hit these limits immediately.
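To see what a cap does during a spike, this sketch simulates a queue of requests draining at a fixed rate. The traffic numbers are invented for illustration; the 300 RPS cap is the upper end of the paid-plan range above.

```python
def simulate_backlog(arrivals_per_second: list, rps_cap: int) -> list:
    """Return the number of requests still waiting at the end of each second."""
    backlog = 0
    history = []
    for arrivals in arrivals_per_second:
        # Serve up to rps_cap requests this second; the rest carry over.
        backlog = max(0, backlog + arrivals - rps_cap)
        history.append(backlog)
    return history


# Two seconds of airdrop-style traffic, then back to normal load.
print(simulate_backlog([100, 1000, 1000, 100, 100], rps_cap=300))
# [0, 700, 1400, 1200, 1000]
```

A two-second burst leaves users waiting long after traffic returns to normal, and in practice most providers reject the excess with errors rather than queue it.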

Why Teams Try API Providers

After hitting RPC limitations, teams often move to blockchain API providers like Zerion, Moralis, or Covalent. These services abstract away the complexity - instead of raw hex, you get JSON responses with decoded data.

What API Providers Solve:

- Pre-decoded data for common use cases (balances, transactions, prices)

- Faster time-to-market than building your own decoders

- Standard REST endpoints developers know how to use

For early-stage products or MVPs, this is often the right move.

Where API Providers Hit Ceilings

API providers work well initially, but teams building production systems run into three core limitations:

Limitation 1: Black Box Data With No Verification

API providers aggregate data from various sources but don't expose their methodology. When discrepancies appear (and they will - different providers show different balances for the same wallet), you have no way to verify which is correct.

For a trading application, incorrect balance data means wrong position sizes. For a wallet, it means user trust issues when their portfolio value differs from what other apps show. For compliance teams, it means you can't audit where numbers came from.

Limitation 2: Performance Caps That Don't Scale

Rate limits remain a problem:

- Moralis paid plans: 50 RPS hard cap

- Zerion: 150 RPS default, up to 2K on request

- Covalent: 50 RPS; API calls fail after credit limit (no autoscaling)

Limitation 3: API-Only Delivery Limits Flexibility

API providers give you REST endpoints. If you need:

- Data in your warehouse (Snowflake, BigQuery) for custom analytics

- Real-time streaming for continuous updates

- SQL access for ad-hoc queries

- Integration with your existing BI tools

You're building those pipelines yourself. The API becomes just another data source you have to ETL.
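The pipeline in question usually starts like this: page through the API, flatten the results, and stage them for a warehouse load. This is a sketch under assumptions: the cursor-based response shape (`items`, `next_cursor`) is hypothetical, and `fetch_page` stands in for whatever HTTP call your provider requires.

```python
import csv
import io
from typing import Callable, Iterable, Iterator, Optional


def iter_rows(fetch_page: Callable[[Optional[str]], dict]) -> Iterator[dict]:
    """Follow cursors until the (hypothetical) API reports no further page."""
    cursor = None
    while True:
        page = fetch_page(cursor)
        yield from page["items"]
        cursor = page.get("next_cursor")
        if cursor is None:
            break


def to_csv(rows: Iterable[dict], columns: list) -> str:
    """Serialize rows to CSV text, a common staging format for warehouse loads."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=columns, extrasaction="ignore")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()
```

A production version also needs retries, rate-limit handling, schema evolution, and backfills, which is where the months of work go.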

What Production-Ready Blockchain Data Actually Requires

After working with hundreds of teams building on blockchain, certain requirements consistently separate prototypes from production systems:

1. Multiple Delivery Methods

Different parts of your stack need data in different formats:

- APIs for user-facing queries (wallet balances, transaction history)

- Streaming (Kafka, Pub/Sub) for real-time event processing

- Warehouse tables (Snowflake, BigQuery) for analytics and reporting

- SQL access for ad-hoc analysis and data exploration

Production systems need all of these. An API-only or streaming-only offering forces you to build the rest yourself.

2. Verified Data With Audit Trails

When leadership asks "are these numbers correct?" or regulators request data provenance, you need answers. Production data infrastructure includes:

- Multi-source reconciliation (comparing data from multiple node providers)

- Verification at every processing stage

- Audit trails showing data lineage

- Documented methodology for calculated metrics
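Multi-source reconciliation can be as simple as a majority vote across independent readings of the same value. The sketch below is one possible approach, not a description of any vendor's pipeline; the source names are placeholders.

```python
from collections import Counter


def reconcile(readings: dict) -> tuple:
    """Return the majority value and the sorted list of sources that disagree with it."""
    counts = Counter(readings.values())
    consensus, votes = counts.most_common(1)[0]
    if votes <= len(readings) / 2:
        # No strict majority: escalate rather than guess.
        raise ValueError("no majority among sources")
    dissenters = sorted(name for name, value in readings.items() if value != consensus)
    return consensus, dissenters


print(reconcile({"node_a": 100, "node_b": 100, "node_c": 99}))
# (100, ['node_c'])
```

Logging the dissenting sources alongside the accepted value is what turns a check like this into an audit trail.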

3. Performance That Scales

Your traffic patterns aren't predictable. Token airdrops, market volatility, viral features - all create sudden load spikes. Production infrastructure handles:

- 100K+ requests per second without throttling

- Sub-second query latency even during peak load

- Automatic scaling without manual intervention

- No rate limits that block your growth

4. Predictable Economics

Credit systems and compute unit pricing make cost forecasting impossible. Production budgets require knowing what you'll pay as you scale. That means:

- Flat per-chain pricing with no method multipliers

- No surprise overages when usage increases

- Enterprise SLAs without $150K+ minimums

- One contract for your entire data stack

Use Case: Building a Consumer Wallet

Consumer wallets like Phantom, MetaMask, or Rainbow need several data operations:

Real-Time Balance Queries

Users open the app expecting to see current holdings instantly. This requires:

- Multi-chain balance aggregation (tokens across Ethereum, Polygon, Solana, etc.)

- Price data to show USD values

- Token metadata (names, logos, decimals)

- Sub-second response times

Via RPC: You query each chain separately, decode ERC-20 logs, fetch prices from another source, handle edge cases (spam tokens, incorrect decimals), aggregate results. Build time: months. Maintenance: ongoing.
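For a sense of what the RPC path involves per token, this sketch builds the JSON-RPC `eth_call` payload for an ERC-20 `balanceOf` and converts the hex result to display units. The function names are illustrative; sending the request, looking up `decimals`, and pricing are left out.

```python
from decimal import Decimal

# First 4 bytes of keccak256("balanceOf(address)").
BALANCE_OF_SELECTOR = "70a08231"


def balance_of_request(token: str, holder: str, request_id: int = 1) -> dict:
    """Build an eth_call payload that reads holder's balance from an ERC-20 contract."""
    # ABI encoding: the address argument is left-padded to a 32-byte word.
    holder_word = holder.lower().removeprefix("0x").rjust(64, "0")
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "eth_call",
        "params": [{"to": token, "data": "0x" + BALANCE_OF_SELECTOR + holder_word}, "latest"],
    }


def to_display_units(result_hex: str, decimals: int) -> Decimal:
    """Scale the raw uint256 result by the token's decimals."""
    return Decimal(int(result_hex, 16)) / (Decimal(10) ** decimals)
```

A wallet repeats this for every token on every chain, which is why request counts grow so quickly with user holdings.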

Via API Providers: Pre-built balance endpoints work initially. You hit rate limits at scale. During the Jupiter airdrop, Phantom needed ~100K RPS for balance queries - impossible via API providers with 50-2K RPS caps.

Via Allium's data infrastructure: Pre-indexed balances across all chains with prices included, accessible via API. Sub-second latency with no decoding or rate limits.

Transaction History and Activity Feeds

Showing users their recent transactions requires:

- Decoded transaction data (not raw hex)

- Context about what happened (swap, transfer, NFT mint)

- Counterparty information when relevant

- Pagination for long histories

Via RPC: Query every block since the wallet's first transaction, parse logs, decode each interaction. For active wallets with thousands of transactions, this is hours of processing.
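Even with log filters instead of a literal block-by-block scan, the history has to be fetched in bounded block ranges, once for transfers sent and once for transfers received. The sketch below builds the `eth_getLogs` filter objects for one wallet; the 2,000-block chunk size is an assumption, since range limits vary by provider.

```python
# keccak256("Transfer(address,address,uint256)")
TRANSFER_TOPIC = "0xddf252ad1be2c89b69c2b068fc378daa952ba7f163c4a11628f55a4df523b3ef"


def block_ranges(start: int, end: int, chunk_size: int) -> list:
    """Split [start, end] into inclusive ranges of at most chunk_size blocks."""
    ranges = []
    lo = start
    while lo <= end:
        hi = min(lo + chunk_size - 1, end)
        ranges.append((lo, hi))
        lo = hi + 1
    return ranges


def transfer_log_filters(wallet: str, start: int, end: int, chunk_size: int = 2000) -> list:
    """Build eth_getLogs filters for ERC-20 transfers sent from or received by wallet."""
    wallet_topic = "0x" + wallet.lower().removeprefix("0x").rjust(64, "0")
    filters = []
    for lo, hi in block_ranges(start, end, chunk_size):
        # Topic position 1 is the sender, position 2 the recipient; None is a wildcard.
        for topics in ([TRANSFER_TOPIC, wallet_topic], [TRANSFER_TOPIC, None, wallet_topic]):
            filters.append({"fromBlock": hex(lo), "toBlock": hex(hi), "topics": topics})
    return filters
```

This only covers ERC-20 transfers. Native transfers, swaps, and NFT activity each need their own queries and decoders.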

Via API Providers: Pre-built transaction endpoints return decoded data. Works for recent history. Historical queries (6+ months) either time out or become prohibitively expensive.

Via Allium's data infrastructure: All historical data already indexed. Query any time range instantly. Full context and enrichment included.

Portfolio Tracking and P&L

Users want to see how their holdings have changed over time and calculate gains/losses. This requires:

- Historical balance snapshots

- Historical price data aligned with balance snapshots

- Cost basis calculations

- P&L across multiple chains and tokens

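Via RPC: Impractical. Without archive-node access you'd need to replay every transaction since genesis to reconstruct historical balances, and even with it you pay for one archive query per token per snapshot.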

Via API Providers: Some offer holdings history endpoints. Coverage varies. Data verification is limited. Cannot easily customize for specific tax rules or accounting methods.

Via Allium's data infrastructure: Historical balances and prices already computed. Query any date range. Export to warehouse for custom P&L calculations.
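Once balances and prices are available as rows, the accounting method is an ordinary calculation you control. As one example, here is a minimal FIFO realized P&L sketch for a single token; the trade tuple format is an assumption, and fees and transfers between wallets are ignored.

```python
from collections import deque
from decimal import Decimal


def fifo_realized_pnl(trades) -> Decimal:
    """trades: iterable of (side, quantity, unit_price) in chronological order."""
    lots = deque()  # open lots as [remaining_quantity, unit_cost]
    realized = Decimal(0)
    for side, quantity, price in trades:
        quantity, price = Decimal(quantity), Decimal(price)
        if side == "buy":
            lots.append([quantity, price])
            continue
        # A sell consumes the oldest open lots first.
        while quantity > 0:
            if not lots:
                raise ValueError("sold more than was held")
            lot = lots[0]
            matched = min(quantity, lot[0])
            realized += matched * (price - lot[1])
            lot[0] -= matched
            quantity -= matched
            if lot[0] == 0:
                lots.popleft()
    return realized


print(fifo_realized_pnl([("buy", "1", "100"), ("buy", "1", "200"), ("sell", "1.5", "300")]))
# 250.0
```

Swapping FIFO for another method (LIFO, average cost) changes only how lots are consumed, which is the kind of customization a fixed endpoint rarely allows.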

Use Case: Building a Trading Application

Trading applications (DEX aggregators, analytics platforms, portfolio managers) have different requirements:

Real-Time DEX Data

Showing live trading activity across decentralized exchanges requires:

- Decoded swap events from all major DEXes

- Token prices at trade execution

- Liquidity pool information

- Sub-second data freshness

Via RPC: You build decoders for Uniswap, Curve, Balancer, and every other DEX. Maintain those as protocols upgrade. Query continuously for new swaps. Miss events during connection failures.
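Each DEX needs its own decoder because the event layouts differ. As an example, this sketch decodes the data field of a Uniswap V2-style pair `Swap` event, whose non-indexed fields are four `uint256` amounts. The function name is illustrative, and other protocols (including Uniswap V3, which uses signed amounts) need different logic.

```python
def decode_v2_swap_amounts(data_hex: str) -> dict:
    """Split a V2-style Swap data payload into its four uint256 amounts."""
    body = data_hex.removeprefix("0x")
    if len(body) != 4 * 64:
        raise ValueError("expected four 32-byte words")
    # Each ABI word is 32 bytes, i.e. 64 hex characters.
    words = [int(body[i:i + 64], 16) for i in range(0, len(body), 64)]
    names = ("amount0_in", "amount1_in", "amount0_out", "amount1_out")
    return dict(zip(names, words))
```

Turning these raw amounts into a price still requires knowing which tokens are `token0` and `token1` for the pool, and each token's decimals.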

Via API Providers: Pre-built DEX endpoints provide decoded trades. Rate limits constrain real-time updates. During high volatility, you're throttled exactly when data matters most.

Via Allium's data infrastructure: Streaming data feeds push decoded DEX trades in real-time via Kafka or Pub/Sub. No rate limits. Guaranteed delivery with replay capability if you miss events.
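Replay is only safe if the consumer tolerates seeing the same event twice. A common pattern is to key each event by transaction hash and log index and skip anything already applied. The sketch below assumes a hypothetical message shape with `tx_hash` and `log_index` fields; it is not a description of Allium's actual schema, and it keeps state in memory where a real consumer would persist it.

```python
def apply_once(events, seen: set, handler) -> int:
    """Run handler on each event not yet seen; return how many were applied."""
    applied = 0
    for event in events:
        key = (event["tx_hash"], event["log_index"])
        if key in seen:
            continue  # duplicate from a replay or redelivery
        handler(event)
        seen.add(key)
        applied += 1
    return applied
```

With this in place, replaying an overlapping window after an outage fills the gap without double-counting trades.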

Historical Analysis and Backtesting

Trading strategies require analyzing historical data:

- All DEX trades over time

- Historical liquidity depth

- Price impact analysis

- Volume trends and patterns

Via RPC: Query block-by-block from genesis. Decode millions of swap events. Reconstruct liquidity pools at every block. Processing time: days to weeks.

Via API Providers: Limited historical depth. Queries time out on large date ranges. Cannot export to warehouse for custom analysis.

Via Allium's data infrastructure: Complete historical data already indexed in warehouse. Run SQL queries across years of data in seconds. Export to Jupyter notebooks or BI tools for analysis.
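With trades already in tabular form, a backtesting input like daily volume per DEX is a single aggregate query. The sketch below uses an in-memory SQLite database as a stand-in for a warehouse; the `dex_trades` table, its columns, and the sample rows are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE dex_trades (block_date TEXT, dex TEXT, usd_amount REAL)")
conn.executemany(
    "INSERT INTO dex_trades VALUES (?, ?, ?)",
    [
        ("2024-01-01", "uniswap", 100.0),
        ("2024-01-01", "uniswap", 50.0),
        ("2024-01-01", "curve", 25.0),
        ("2024-01-02", "uniswap", 10.0),
        ("2024-01-03", "curve", 5.0),
    ],
)

DAILY_VOLUME_SQL = """
SELECT block_date, dex, SUM(usd_amount) AS volume_usd
FROM dex_trades
WHERE block_date BETWEEN ? AND ?
GROUP BY block_date, dex
ORDER BY block_date, dex
"""

rows = conn.execute(DAILY_VOLUME_SQL, ("2024-01-01", "2024-01-02")).fetchall()
print(rows)
# [('2024-01-01', 'curve', 25.0), ('2024-01-01', 'uniswap', 150.0), ('2024-01-02', 'uniswap', 10.0)]
```

The same query shape carries over to Snowflake or BigQuery with minor dialect changes.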

Custom Metrics and Alerting

Trading teams need custom calculations specific to their strategies:

- Unusual volume patterns

- Liquidity changes above thresholds

- Price movements across chains

- Arbitrage opportunities

Via RPC: You build custom monitoring systems on top of raw node data. Maintain infrastructure to process continuous streams. Handle edge cases and data gaps.

Via API Providers: Locked into their pre-built metrics. Cannot customize beyond what their endpoints provide. No streaming infrastructure for custom alerts.

Via Allium's data infrastructure: Stream raw or enriched data into your processing systems. Build custom metrics using tools like Apache Beam or Flink. SQL access for ad-hoc metric development. Full flexibility while avoiding infrastructure maintenance.
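A custom metric can be quite small once the data arrives decoded. As an example of an "unusual volume" alert, this sketch flags any interval whose volume exceeds a multiple of the trailing average. The window size and threshold are arbitrary assumptions to tune per strategy.

```python
from collections import deque


def volume_spikes(volumes, window: int = 3, multiple: float = 3.0) -> list:
    """Return indexes where volume exceeds multiple x the mean of the previous window values."""
    trailing = deque(maxlen=window)
    spikes = []
    for index, volume in enumerate(volumes):
        # Only evaluate once a full window of history is available.
        if len(trailing) == window:
            baseline = sum(trailing) / window
            if baseline > 0 and volume > multiple * baseline:
                spikes.append(index)
        trailing.append(volume)
    return spikes


print(volume_spikes([10, 12, 11, 50, 12, 11]))  # [3]
```

The same function works over a batch query result or inside a streaming job, since it only needs values in time order.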

The Complementary Approach: Using the Right Tool for Each Job

The best architecture isn't choosing one approach - it's using the right tool for each requirement.

Continue Using RPC For:

- Transaction submission (sending txs to blockchain)

- Mempool monitoring (watching pending transactions)

- Real-time block propagation (sub-second MEV, arbitrage)

Use Data Infrastructure Like Allium For:

- Historical queries (anything beyond recent blocks)

- Multi-chain aggregation (balances, transactions across networks)

- Analytics and reporting (dashboards, metrics, insights)

- Warehouse integration (blockchain data in Snowflake/BigQuery)

- Decoded data at scale (don't build/maintain decoders)

- Compliance and auditing (verified data with lineage)

This complementary pattern shows up across production systems. Wallets use RPC for transaction submission and data infrastructure for balance queries at scale. DEXs use both for different parts of their stack.

The key insight: RPC and data infrastructure aren't competing - they're solving different problems.

Total Cost Comparison

When evaluating options, calculate total cost to get usable data in production, not just the sticker price of one component.

RPC Approach:

- RPC bill: $5-9K/month (after hitting rate limits on $299 base plan)

- Engineering: 2-3 engineers building decoders and pipelines = $40-60K/month

- Infrastructure: ETL systems, databases, monitoring = $20K/month

- Total: roughly $65-90K/month

API Provider Approach:

- API bill: $1-5K/month (scales with usage and chains)

- Engineering: Building warehouse pipelines, custom metrics = $20-40K/month

- Infrastructure: ETL, analytics databases = $15K/month

- Total: roughly $36-60K/month

Data Infrastructure like Allium:

- Platform fee: $10-20K/month all-in

- Includes: RPC ingestion, decoding, enrichment, APIs, streaming, warehouse delivery, verification

- Engineering: Focus on product features, not data plumbing

- Total: $10-20K/month

The data infrastructure approach is often cheaper once you account for engineering time and infrastructure costs. More importantly, it's predictable - no surprise overages, no scope creep as you add chains or scale usage.
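To rerun this comparison with your own numbers, sum the low and high ends of each line item. The sketch below uses the figures listed above, in thousands of dollars per month; summing them exactly gives ranges close to the rounded totals quoted.

```python
def total_range(line_items: dict) -> tuple:
    """Sum the low and high ends of each (low, high) line item."""
    low = sum(item_low for item_low, _ in line_items.values())
    high = sum(item_high for _, item_high in line_items.values())
    return low, high


# Figures from the lists above, in $K/month.
RPC = {"rpc_bill": (5, 9), "engineering": (40, 60), "infrastructure": (20, 20)}
API = {"api_bill": (1, 5), "engineering": (20, 40), "infrastructure": (15, 15)}
PLATFORM = {"platform_fee": (10, 20)}

for name, items in (("RPC", RPC), ("API", API), ("Platform", PLATFORM)):
    print(name, total_range(items))
# RPC (65, 89)
# API (36, 60)
# Platform (10, 20)
```

Engineering time dominates both of the first two totals, so that is the line item worth estimating most carefully.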

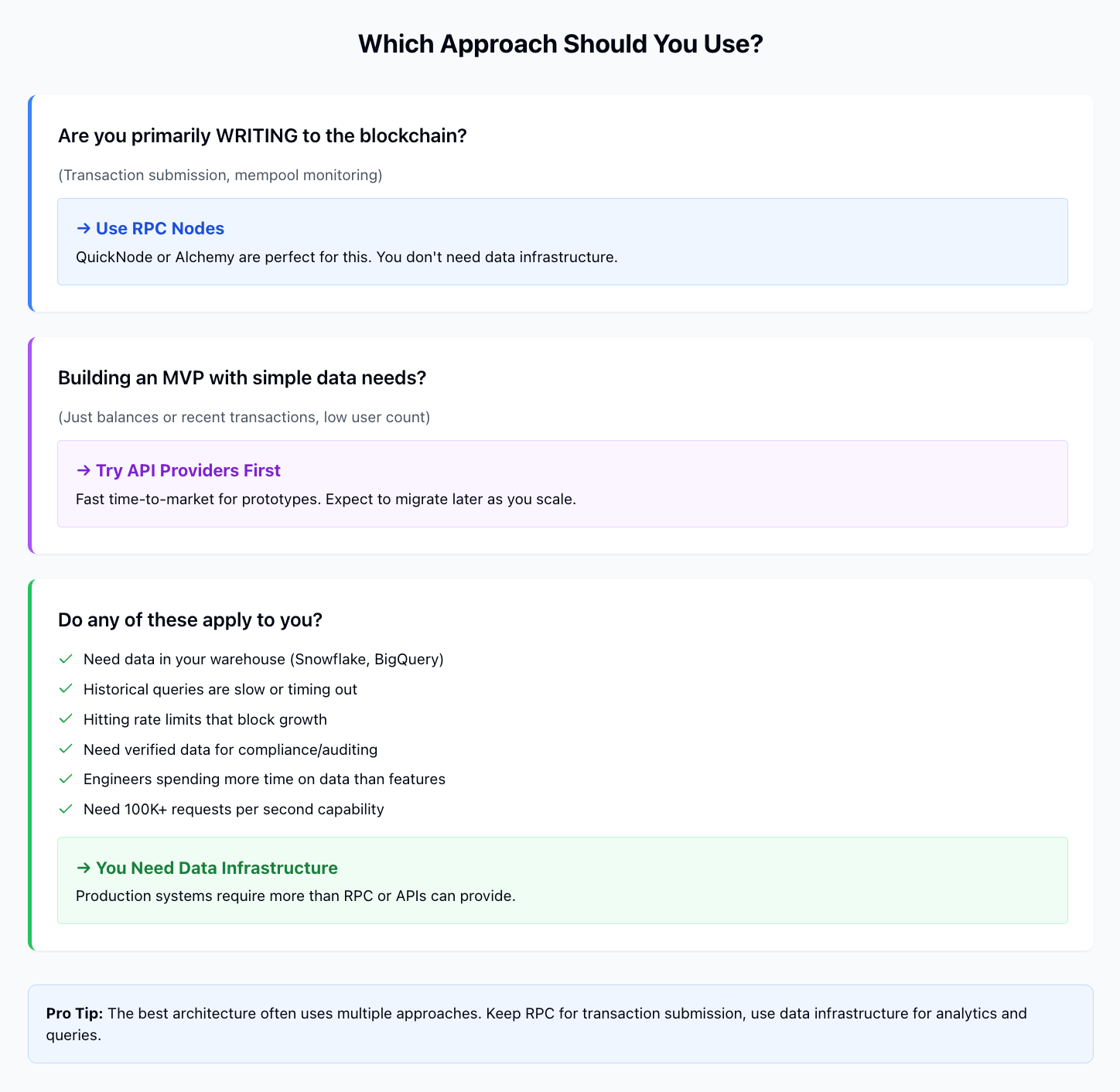

Making the Right Choice for Your Team

The right approach depends on where you are:

Early Prototype → Use RPC or API Providers

- Time to market matters more than cost or flexibility

- User scale is predictable and small

- Data requirements are simple (just balances or recent transactions)

- Team size doesn't support building infrastructure

Production System → Need Data Infrastructure

- Users expect sub-second responses even during peak load

- You need data in multiple formats (APIs, streaming, warehouse)

- Historical analysis is a core feature

- Compliance requires verified data with audit trails

- Team is spending significant time on data plumbing instead of product features

Signs You've Outgrown Your Current Approach:

- RPC bills are unpredictable and spiking

- Rate limits are blocking feature launches

- Engineers spend more time maintaining decoders than building product

- Historical queries take too long or time out

- You can't verify where numbers come from when questioned

- You need data in your warehouse but building ETL is taking months

- Adding new chains means weeks of integration work

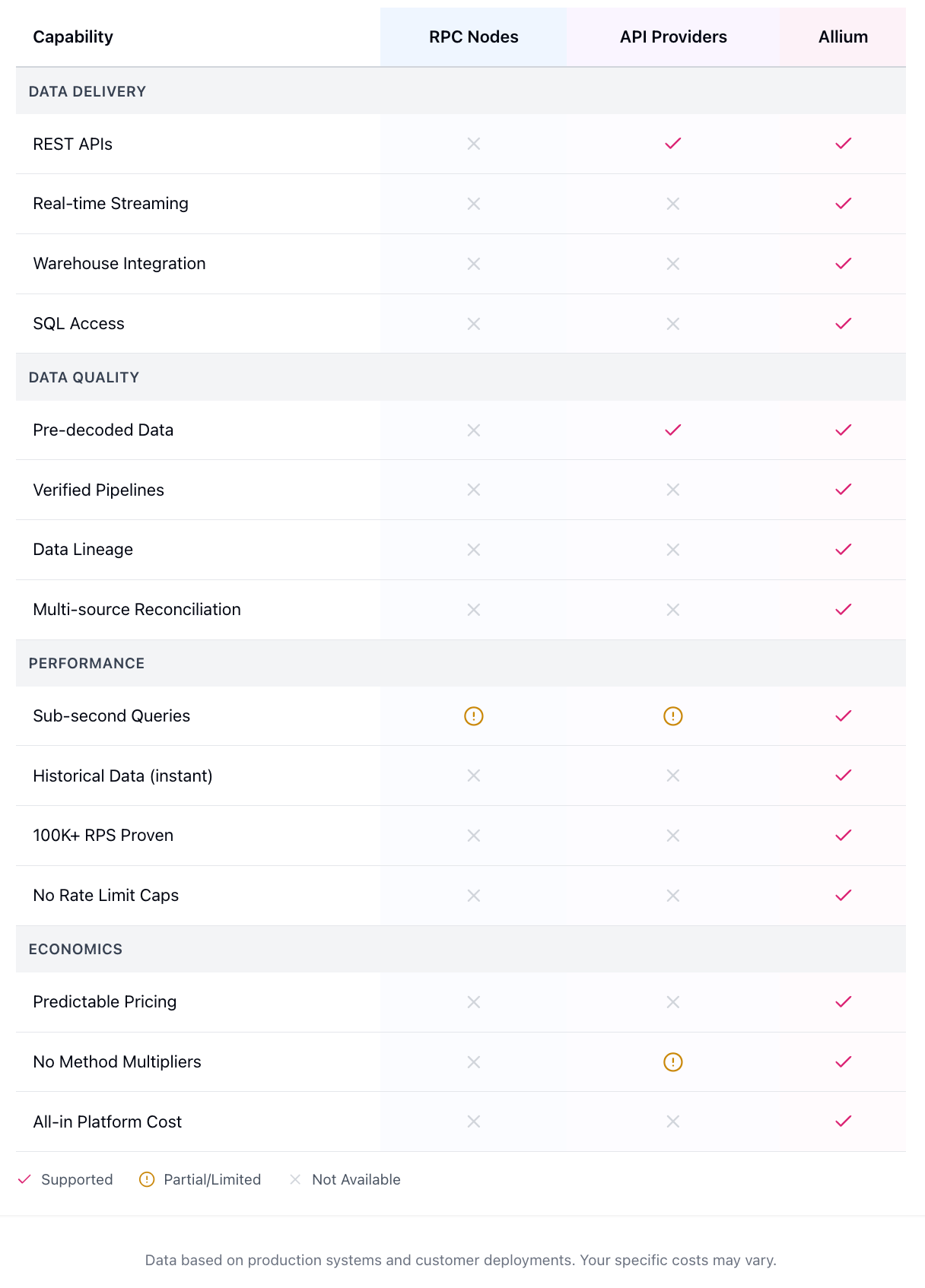

What to Look for in Blockchain Data Infrastructure

If you're evaluating data infrastructure providers, these capabilities separate platforms that work in production from those that don't:

Multi-Method Delivery: Can you get data via APIs, streaming, SQL, and warehouse tables? Or are you locked into one access pattern?

Verification and Lineage: Can they show you where each data point came from and how it was calculated? Or is it a black box?

Proven Scale: Have they handled 100K+ RPS in production? Or do they cite rate limits and ask you to "contact sales" for scale?

Predictable Pricing: Is pricing flat and transparent? Or are there hidden multipliers, credit systems, and overage charges?

Coverage and Quality: Do they support the chains you need? Does every chain have the same data quality and freshness?

Time to Value: Can you get data flowing in days? Or does implementation take months of custom integration work?

Conclusion

RPC nodes and API providers serve important functions in the blockchain data stack. RPC is essential for transaction submission and real-time reads. APIs provide quick time-to-market for common use cases.

But production systems - especially consumer wallets and trading applications that need verified data at scale - require infrastructure beyond what RPC or APIs provide. The question isn't whether to use RPC or APIs, but when your requirements have outgrown what they can deliver.

If your team is spending more time on data plumbing than product features, if your costs are unpredictable and climbing, or if you're hitting rate limits that block growth, it's time to evaluate data infrastructure designed for production blockchain applications.

Resources:

- Allium Documentation - Technical guides and API reference

- Phantom Case Study - 100K RPS during Jupiter airdrop